
Facebook today announced that it trained an AI model to build speech recognition systems that don't require transcribed data. The company, which trained systems for Swahili, Tatar, Kyrgyz, and other languages, claims that the model, wav2vec Unsupervised (Wav2vec-U), is an important step toward building machines that can solve a range of tasks by learning from their observations.

AI-powered speech transcription platforms are a dime a dozen in a market estimated to be worth more than $1.6 billion. Deepgram and Otter.ai build voice recognition models for cloud-based real-time processing, while Verbit offers tech not unlike that of Oto, which combines intonation with acoustic data to bolster speech understanding. Amazon, Google, Facebook, and Microsoft offer their own speech transcription services.

But the dominant form of AI for speech recognition falls into a category known as supervised learning. Supervised learning is defined by its use of labeled datasets to train algorithms to classify data and predict outcomes, an approach that, while effective, is time-consuming and expensive. Companies have to obtain tens of thousands of hours of audio and recruit human teams to manually transcribe it. And this same process has to be repeated for every language.

Unsupervised speech recognition

Facebook's Wav2vec-U sidesteps the challenges of supervised learning by taking a self-supervised (also known as unsupervised) approach. With unsupervised learning, Wav2vec-U is fed "unknown" data for which no previously defined labels exist. The system must teach itself to classify the data, processing it to learn from its structure.

While relatively underexplored in the speech domain, a growing body of research demonstrates the potential of learning from unlabeled data. Microsoft is using unsupervised learning to extract knowledge about disruptions to its cloud services. More recently, Facebook itself announced SEER, an unsupervised model trained on a billion images that achieves state-of-the-art results on a range of computer vision benchmarks.

Wav2vec-U learns purely from recorded speech and unlabeled text, eliminating the need for transcriptions. Using a self-supervised model and Facebook's wav2vec 2.0 framework, as well as what's called a clustering method, Wav2vec-U segments recordings into units that loosely correspond to individual sounds.
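The segmentation idea can be illustrated with a toy sketch. The article only says Wav2vec-U uses "a clustering method," so the choice of k-means here is an assumption, and the helpers `kmeans` and `segment` are hypothetical names. In the real system the inputs would be learned wav2vec 2.0 representations of audio frames rather than the hand-made 2-D vectors used below. Each frame receives a cluster label, and runs of consecutive frames sharing a label are merged and mean-pooled into one segment, a rough stand-in for "units that loosely correspond to individual sounds."

```python
import math
import random


def kmeans(frames, k, iters=20, seed=0):
    """Plain k-means over a list of equal-length feature vectors.

    Hypothetical helper: returns one cluster label per frame.
    """
    rng = random.Random(seed)
    centroids = [list(f) for f in rng.sample(frames, k)]
    labels = [0] * len(frames)
    for _ in range(iters):
        # Assignment step: each frame goes to its nearest centroid.
        labels = [min(range(k), key=lambda c: math.dist(f, centroids[c]))
                  for f in frames]
        # Update step: move each centroid to the mean of its assigned frames.
        for c in range(k):
            members = [f for f, lab in zip(frames, labels) if lab == c]
            if members:
                centroids[c] = [sum(col) / len(members) for col in zip(*members)]
    return labels


def segment(frames, labels):
    """Merge runs of consecutive frames with the same label; mean-pool each run.

    Hypothetical helper: returns (label, start, end_exclusive, pooled_vector) tuples.
    """
    segments = []
    start = 0
    for i in range(1, len(labels) + 1):
        # A run ends at the last frame or where the cluster label changes.
        if i == len(labels) or labels[i] != labels[start]:
            run = frames[start:i]
            pooled = [sum(col) / len(run) for col in zip(*run)]
            segments.append((labels[start], start, i, pooled))
            start = i
    return segments
```

On toy input with two well-separated groups, such as `frames = [[0.0, 0.1], [0.1, 0.0], [0.05, 0.05], [5.0, 5.1], [5.1, 4.9], [0.0, 0.0]]`, calling `segment(frames, kmeans(frames, k=2))` yields three segments covering frames 0 to 2, 3 to 4, and 5, with the first and last sharing a cluster label. Merging runs is what turns frame-level labels into variable-length, sound-like units.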

To learn to recognize words in a recording, Facebook trained a generative adversarial network (GAN) consisting of a generator and a discriminator. The generator takes audio segments and predicts a phoneme (i.e., unit of sound) corresponding to a sound in language. It's trained by trying to fool the discriminator, which assesses whether the predicted sequences look realistic. The discriminator, for its part, learns to distinguish between the generator's speech recognition output and real text from various sources that has been "phonemized," or converted into sequences of phonemes.

While the GAN's transcriptions are initially poor in quality, they improve with the feedback of the discriminator.
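The adversarial setup described above follows the general GAN pattern. As a hedged sketch, it can be written with the standard GAN minimax objective, where $G$ is the generator mapping segment representations $S$ to phoneme sequences, $C$ is the discriminator returning the probability that its input is real phonemized text, and $Y$ is a phonemized text sample. This is the textbook form, not necessarily the exact loss Facebook used, which may add further penalty terms.

```latex
\min_{G}\,\max_{C}\;
  \mathbb{E}_{Y \sim \mathcal{Y}}\big[\log C(Y)\big]
  + \mathbb{E}_{S \sim \mathcal{S}}\big[\log\big(1 - C(G(S))\big)\big]
```

Reading the objective: the discriminator pushes $C(Y)$ toward 1 for real phonemized text and $C(G(S))$ toward 0 for generated transcriptions, while the generator is updated to push $C(G(S))$ back toward 1. That feedback loop is what lets initially poor transcriptions improve.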

"It takes about half a day — roughly 12 to 15 hours on a single GPU — to train an average Wav2vec-U model. This excludes self-supervised pre-training of the model, but we previously made these models publicly available for others to use," Facebook AI research scientist manager Michael Auli told VentureBeat via email. "Half a day on a single GPU is not very much, and this makes the technology accessible to a wider audience to build speech technology for many more languages of the world."

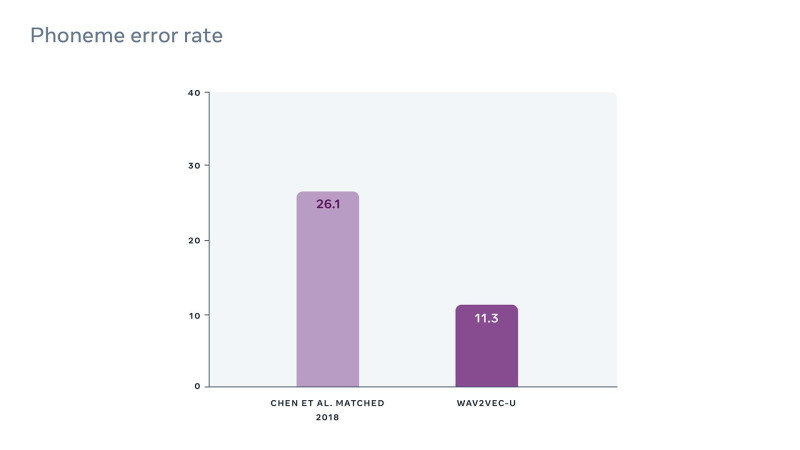

To get a sense of how well Wav2vec-U works in practice, Facebook says it first evaluated it on a benchmark called TIMIT. Trained on as little as 9.6 hours of speech and 3,000 sentences of text data, Wav2vec-U reduced the error rate by 63% compared with the next-best unsupervised method.
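For readers unfamiliar with the metric: speech recognition error rates are typically computed as the edit distance between a reference token sequence and a predicted one, divided by the reference length (TIMIT results are commonly reported at the phoneme level), and a "63% lower" figure is a relative reduction between two such rates. The sketch below is a generic implementation with hypothetical helper names (`edit_distance`, `error_rate`, `relative_reduction`), not Facebook's evaluation code.

```python
def edit_distance(ref, hyp):
    """Levenshtein distance between two token sequences."""
    prev = list(range(len(hyp) + 1))  # distances from an empty ref prefix
    for i, r in enumerate(ref, start=1):
        cur = [i]
        for j, h in enumerate(hyp, start=1):
            cur.append(min(
                prev[j] + 1,             # delete r
                cur[j - 1] + 1,          # insert h
                prev[j - 1] + (r != h),  # substitute (cost 0 on a match)
            ))
        prev = cur
    return prev[-1]


def error_rate(refs, hyps):
    """Total edits divided by total reference tokens, over a corpus."""
    errors = sum(edit_distance(r, h) for r, h in zip(refs, hyps))
    return errors / sum(len(r) for r in refs)


def relative_reduction(baseline, new):
    """Fractional improvement of `new` over `baseline` (0.63 means 63% lower)."""
    return (baseline - new) / baseline
```

For example, one substituted phoneme in a five-phoneme reference, `error_rate([["sh", "iy", "hh", "ae", "d"]], [["sh", "iy", "hh", "eh", "d"]])`, gives 0.2. With purely illustrative rates (not numbers reported by Facebook), dropping from 40% to 14.8% is `relative_reduction(0.40, 0.148)`, about 0.63, i.e. a 63% reduction.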

Wav2vec-U was also as accurate as the state-of-the-art supervised speech recognition method from only a few years ago, which was trained on hundreds of hours of speech data.

Future work

AI has a well-known bias problem, and unsupervised learning doesn't eliminate the potential for bias in a system's predictions. For example, unsupervised computer vision systems can pick up racial and gender stereotypes present in training datasets. Some experts, including Facebook chief AI scientist Yann LeCun, theorize that removing these biases might require specialized training of unsupervised models with additional, smaller datasets curated to "unteach" specific biases.

Facebook acknowledges that more research must be done to determine the best way to address bias. "We have not yet investigated potential biases in the model. Our focus was on developing a method to remove the need for supervision," Auli said. "A benefit of the self-supervised approach is that it may help avoid biases introduced through data labeling, but this is an important area that we are very interested in."

In the meantime, Facebook is releasing the code for Wav2vec-U as open source to enable developers to build speech recognition systems using unlabeled speech audio recordings and unlabeled text. While Facebook didn't use user data for the research, Auli says there's potential for the model to support future internal and external tools, like video transcription.

"AI technologies like speech recognition should not benefit only people who are fluent in one of the world's most widely spoken languages. Reducing our dependence on annotated data is an important part of expanding access to these tools," Facebook wrote in a blog post. "People learn many speech-related skills just by listening to others around them. This suggests that there is a better way to train speech recognition models, one that does not require large amounts of labeled data."
