As game worlds grow more vast and complex, making sure they are playable and bug-free is becoming increasingly difficult for developers. Gaming companies are looking for new tools, such as artificial intelligence, to help overcome the mounting challenge of testing their products.

A new paper by a group of AI researchers at Electronic Arts shows that deep reinforcement learning agents can help test games and make sure they are balanced and solvable.

“Adversarial Reinforcement Learning for Procedural Content Generation,” the technique presented by the EA researchers, is a novel approach that addresses some of the shortcomings of previous AI methods for testing games.

Testing large game environments

“Today’s big titles can have more than 1,000 developers and often ship cross-platform on PlayStation, Xbox, mobile, etc.,” Linus Gisslén, senior machine learning research engineer at EA and lead author of the paper, told TechTalks. “Also, with the latest trend of open-world games and live service we see that a lot of content has to be procedurally generated at a scale that we previously have not seen in games. All this introduces a lot of ‘moving parts’ which all can create bugs in our games.”

Developers currently have two main tools at their disposal to test their games: scripted bots and human play-testers. Human play-testers are very good at finding bugs. But they can be slowed down immensely when dealing with vast environments. They can also get bored and distracted, especially in a very large game world. Scripted bots, on the other hand, are fast and scalable. But they can't match the complexity of human testers, and they perform poorly in large environments such as open-world games, where mindless exploration is not necessarily a successful strategy.

“Our goal is to use reinforcement learning (RL) as a method to merge the advantages of humans (self-learning, adaptive, and curious) with scripted bots (fast, cheap and scalable),” Gisslén said.

Reinforcement learning is a branch of machine learning in which an AI agent tries to take actions that maximize its rewards in its environment. For example, in a game, the RL agent starts by taking random actions. Based on the rewards or punishments it receives from the environment (staying alive, losing lives or health, earning points, finishing a level, etc.), it develops an action policy that results in the best outcomes.
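To make the reward-driven loop concrete, here is a minimal sketch using tabular Q-learning, one common RL algorithm. It is not the method from the EA paper. The one-dimensional "corridor" level, the reward values, and every name in the code are assumptions chosen purely for illustration.

```python
import random

def train_corridor_agent(length=6, episodes=500, alpha=0.5, gamma=0.9,
                         epsilon=0.2, seed=0):
    """Tabular Q-learning on a hypothetical 1-D corridor level.

    States are positions 0..length-1. The agent starts at 0 and the goal is
    the last cell. Actions: 0 = step left, 1 = step right.
    """
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(length)]
    goal = length - 1
    for _ in range(episodes):
        state = 0
        for _ in range(100):  # cap on episode length
            # Act randomly when exploring or when both actions look equal;
            # otherwise pick the action with the higher estimated value.
            if rng.random() < epsilon or q[state][0] == q[state][1]:
                action = rng.randrange(2)
            else:
                action = 0 if q[state][0] > q[state][1] else 1
            if action == 0:
                next_state = max(0, state - 1)
            else:
                next_state = min(goal, state + 1)
            done = next_state == goal
            # Assumed rewards: +1 for finishing, a small penalty per step.
            reward = 1.0 if done else -0.01
            target = reward if done else reward + gamma * max(q[next_state])
            q[state][action] += alpha * (target - q[state][action])
            state = next_state
            if done:
                break
    return q

q_table = train_corridor_agent()
# Greedy action for every non-goal cell after training.
greedy_policy = ["left" if left > right else "right"
                 for left, right in q_table[:-1]]
print(greedy_policy)
```

The agent begins with no knowledge of the level and acts randomly, then gradually shifts toward the actions that led to higher reward, which is the behavior the paragraph above describes.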

Testing game content with adversarial reinforcement learning

In the past decade, AI research labs have used reinforcement learning to master complicated games. More recently, gaming companies have also become interested in using reinforcement learning and other machine learning techniques in the game development lifecycle.

For example, in game testing, an RL agent can be trained to learn a game by letting it play on existing content (maps, levels, etc.). Once the agent masters the game, it can help find bugs in new maps. The problem with this approach is that the RL system often ends up overfitting on the maps it has seen during training. This means that it will become very good at exploring those maps but terrible at testing new ones.

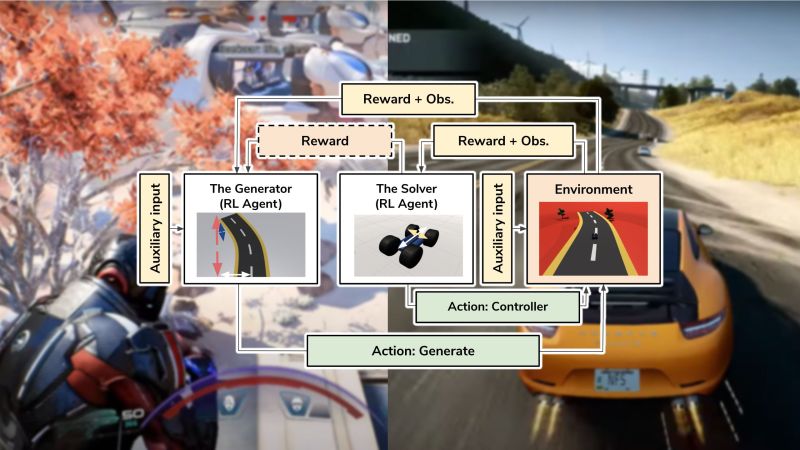

The technique proposed by the EA researchers overcomes these limits with “adversarial reinforcement learning,” a technique inspired by generative adversarial networks (GANs), a type of deep learning architecture that pits two neural networks against each other to create and detect synthetic data.

In adversarial reinforcement learning, two RL agents compete and collaborate to create and test game content. The first agent, the Generator, uses procedural content generation (PCG), a technique that automatically generates maps and other game elements. The second agent, the Solver, tries to finish the levels the Generator creates.

There is a symbiosis between the two agents. The Solver is rewarded for taking actions that help it pass the generated levels. The Generator, on the other hand, is rewarded for creating levels that are challenging but not impossible for the Solver to finish. The feedback that the two agents provide each other enables them to become better at their respective tasks as training progresses.
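The "challenging but not impossible" incentive can be illustrated with a toy sketch. This is not the paper's training setup: the Solver here is a fixed rule rather than a learning agent, the Generator is a simple epsilon-greedy learner, and the gap sizes, jump range, and reward values are all hypothetical.

```python
import random

GAPS = [1, 2, 3, 4, 5]    # hypothetical gap sizes the Generator can choose
SOLVER_MAX_JUMP = 3       # hypothetical fixed skill of the stand-in Solver

def solver_reaches(gap):
    """Stand-in Solver: succeeds whenever the gap is within its jump range."""
    return gap <= SOLVER_MAX_JUMP

def generator_reward(gap, solved):
    """Reward harder blocks, but penalize blocks the Solver cannot reach."""
    return gap / max(GAPS) if solved else -1.0

def train_generator(rounds=2000, epsilon=0.1, seed=0):
    rng = random.Random(seed)
    value = {gap: 0.0 for gap in GAPS}   # running mean reward per gap size
    count = {gap: 0 for gap in GAPS}
    for _ in range(rounds):
        # Epsilon-greedy choice of the next block's gap.
        if rng.random() < epsilon:
            gap = rng.choice(GAPS)
        else:
            gap = max(GAPS, key=lambda g: value[g])
        solved = solver_reaches(gap)
        reward = generator_reward(gap, solved)
        count[gap] += 1
        value[gap] += (reward - value[gap]) / count[gap]
    return value

values = train_generator()
best_gap = max(values, key=values.get)
print(best_gap)
```

Under these assumptions the Generator settles on the widest gap the Solver can still clear. In a full adversarial setup the Solver would also be learning, so that target would keep moving as training progresses.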

The generation of levels takes place in a step-by-step fashion. For example, if the adversarial reinforcement learning system is being used for a platform game, the Generator creates one game block and moves on to the next one after the Solver manages to reach it.

“Using an adversarial RL agent is a vetted method in other fields, and is often needed to enable the agent to reach its full potential,” Gisslén said. “For example, DeepMind used a version of this when they let their Go agent play against different versions of itself in order to achieve super-human results. We use it as a tool for challenging the RL agent in training to become more general, meaning that it will be more robust to changes that happen in the environment, which is often the case in game-play testing where an environment can change on a daily basis.”

Gradually, the Generator will learn to create a variety of solvable environments, and the Solver will become more versatile in testing different environments.

A robust game-testing reinforcement learning system can be very useful. For example, many games have tools that allow players to create their own levels and environments. A Solver agent that has been trained on a variety of PCG-generated levels will be much more efficient at testing the playability of user-generated content than traditional bots.

One of the interesting details in the adversarial reinforcement learning paper is the introduction of “auxiliary inputs.” This is a side channel that affects the rewards of the Generator and enables game developers to control its learned behavior. In the paper, the researchers show how the auxiliary input can be used to control the difficulty of the levels generated by the AI system.
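One way to picture an auxiliary input is as a dial that rescales the Generator's reward. The sketch below is an assumption-laden illustration, not the paper's formula: the value range of -1 to 1, its meaning (negative favors easy blocks, positive favors hard ones), and all names are hypothetical.

```python
AUX_GAPS = [1, 2, 3, 4, 5]    # hypothetical gap sizes for a platform level
AUX_SOLVER_MAX_JUMP = 3       # hypothetical reach of the Solver

def aux_generator_reward(gap, aux):
    """Generator reward shaped by an auxiliary input in [-1, 1] (assumed range).

    Unreachable blocks are always penalized. For reachable blocks, a positive
    aux value rewards difficulty and a negative one rewards ease.
    """
    if gap > AUX_SOLVER_MAX_JUMP:
        return -1.0
    difficulty = gap / AUX_SOLVER_MAX_JUMP   # between 0 and 1 for reachable gaps
    return aux * difficulty

def preferred_gap(aux):
    """Gap size with the highest reward for a given auxiliary value."""
    return max(AUX_GAPS, key=lambda gap: aux_generator_reward(gap, aux))

for aux in (-1.0, 1.0):
    print(aux, preferred_gap(aux))
```

With the same candidate blocks, changing only the auxiliary value flips which block the Generator prefers, which is the kind of designer-facing control the paragraph above describes.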

EA’s AI research team applied the technique to a platform game and a racing game. In the platform game, the Generator gradually places blocks from the starting point to the goal. The Solver is the player and must jump from block to block until it reaches the goal. In the racing game, the Generator places the segments of the track, and the Solver drives the car to the finish line.

The researchers show that by using the adversarial reinforcement learning system and tuning the auxiliary input, they were able to control and adjust the generated game environment at different levels.

Their experiments also show that a Solver trained with adversarial machine learning is much more robust than traditional game-testing bots or RL agents that have been trained on fixed maps.

Applying adversarial reinforcement learning to real games

The paper does not provide a detailed explanation of the architecture the researchers used for the reinforcement learning system. The little information it contains shows that the Generator and Solver use simple, two-layer neural networks with 512 units, which should not be very costly to train. However, the example games that the paper includes are very simple, and the architecture of the reinforcement learning system would likely vary with the complexity of the environment and action space of the target game.
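For a rough sense of scale, the arithmetic below counts the trainable parameters of a small fully connected network. It reads "two-layer with 512 units" as two hidden layers of 512 units each, which is an interpretation rather than something the article confirms. The input size of 64 and output size of 8 are purely hypothetical.

```python
def mlp_parameter_count(input_size, hidden_sizes, output_size):
    """Count weights and biases in a fully connected network."""
    sizes = [input_size, *hidden_sizes, output_size]
    # Each layer has n_in * n_out weights plus n_out biases.
    return sum(n_in * n_out + n_out for n_in, n_out in zip(sizes, sizes[1:]))

# Hypothetical observation and action sizes; only the 512-unit layers
# come from the article.
total = mlp_parameter_count(64, [512, 512], 8)
print(total)  # 300040
```

Around 300,000 parameters is small by deep learning standards, which is consistent with the observation that such networks should be cheap to train.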

“We tend to take a pragmatic approach and try to keep the training cost at a minimum as this has to be a viable option when it comes to ROI for our QV (Quality Verification) teams,” Gisslén said. “We try to keep the skill range of each trained agent to just include one skill/objective (e.g., navigation or target selection) as having multiple skills/objectives scales very poorly, causing the models to be very expensive to train.”

The work is still in the research stage, Konrad Tollmar, research director at EA and co-author of the paper, told TechTalks. “But we’re having collaborations with various game studios across EA to explore if this is a viable approach for their needs. Overall, I’m truly optimistic that ML is a technique that will be a standard tool in any QV team in the future in some shape or form,” he said.

Adversarial reinforcement learning agents can help human testers focus on evaluating parts of the game that can't be tested with automated systems, the researchers believe.

“Our vision is that we can unlock the potential of human playtesters by moving from mundane and repetitive tasks, like finding bugs where the players can get stuck or fall through the ground, to more interesting use-cases like testing game-balance, meta-game, and ‘funness,’” Gisslén said. “These are things that we don’t see RL agents doing in the near future but are immensely important to games and game production, so we don’t want to spend human resources doing basic testing.”

The RL system can become an important part of creating game content, as it will enable designers to evaluate the playability of their environments as they create them. In a video that accompanies their paper, the researchers show how a level designer can get help from the RL agent in real time while placing blocks for a platform game.

Eventually, this and other AI systems can become an important part of content and asset creation, Tollmar believes.

“The tech is still new and we still have a lot of work to be done in production pipeline, game engine, in-house expertise, etc. before this can fully take off,” he said. “However, with the current research, EA will be ready when AI/ML becomes a mainstream technology that is used across the gaming industry.”

As research in the field continues to advance, AI can eventually play a more important role in other parts of game development and the gaming experience.

“I think as the technology matures and acceptance and expertise grows within gaming companies this will be not only something that is used within testing but also as game-AI whether it is collaborative, opponent, or NPC game-AI,” Tollmar said. “A fully trained testing agent can of course also be imagined being a character in a shipped game that you can play against or collaborate with.”

Ben Dickson is a software engineer and the founder of TechTalks. He writes about technology, business, and politics.

This story originally appeared on Bdtechtalks.com. Copyright 2021
