A new neural network architecture designed by artificial intelligence researchers at DarwinAI and the University of Waterloo will make it possible to perform image segmentation on computing devices with low power and compute capacity.

Segmentation is the process of determining the boundaries and areas of objects in images. We humans perform segmentation without conscious effort, but it remains a key challenge for machine learning systems. It is vital to the functionality of mobile robots, self-driving cars, and other artificial intelligence systems that must interact with and navigate the real world.

Until recently, segmentation required large, compute-intensive neural networks. This made it difficult to run these deep learning models without a connection to cloud servers.

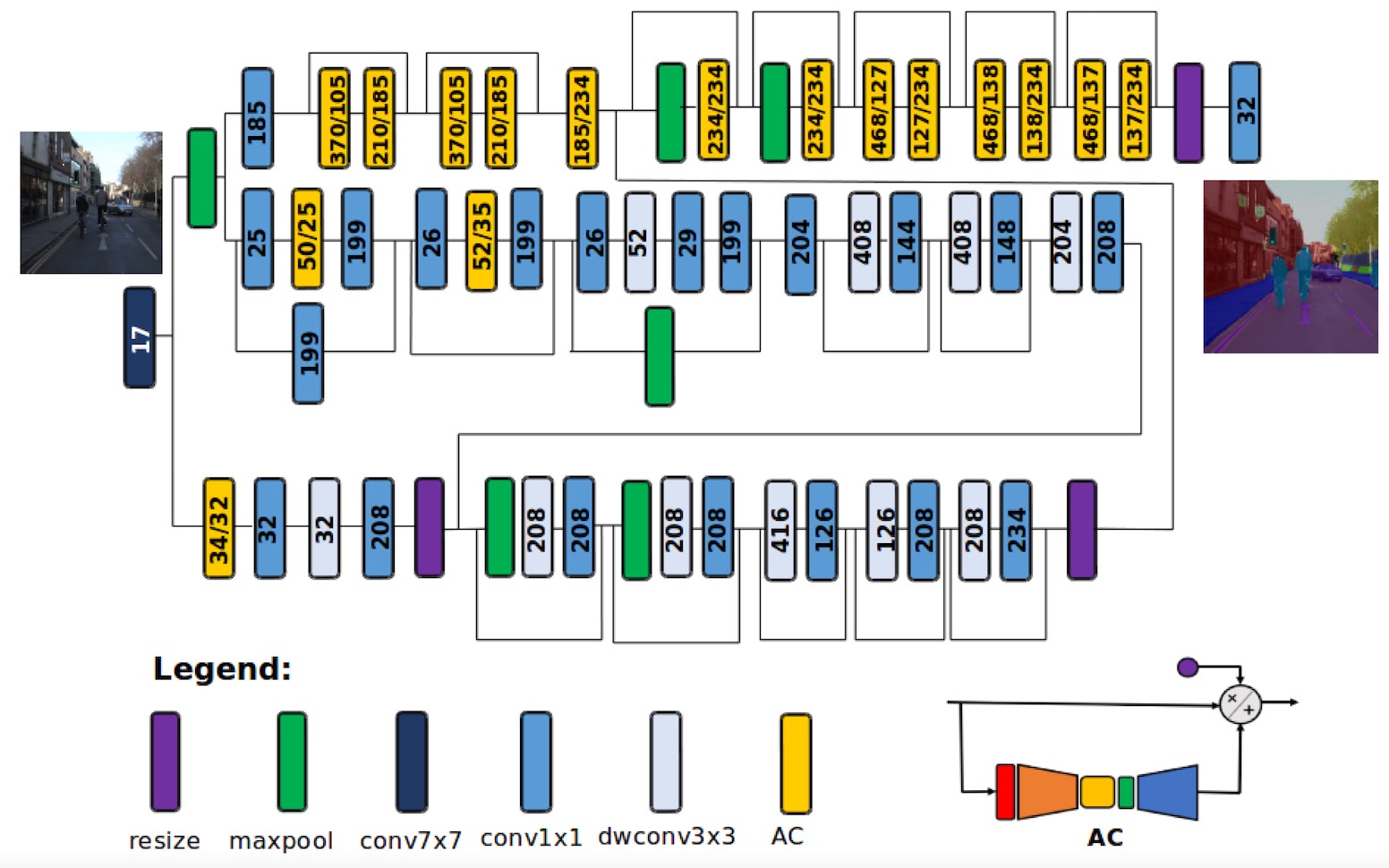

In their latest work, the scientists at DarwinAI and the University of Waterloo have managed to create a neural network that provides near-optimal segmentation and is small enough to fit on resource-constrained devices. Called AttendSeg, the neural network is detailed in a paper that has been accepted at this year’s Conference on Computer Vision and Pattern Recognition (CVPR).

Object classification, detection, and segmentation

One of the key reasons for the growing interest in machine learning systems is the problems they can solve in computer vision. Some of the most common applications of machine learning in computer vision include image classification, object detection, and segmentation.

Image classification determines whether a certain type of object is present in an image or not. Object detection takes image classification one step further and provides the bounding box where detected objects are located.

Segmentation comes in two flavors: semantic segmentation and instance segmentation. Semantic segmentation specifies the object class of each pixel in an input image. Instance segmentation separates individual instances of each type of object. For practical purposes, the output of segmentation networks is usually presented by coloring pixels. Segmentation is by far the most complicated type of classification task.

The complexity of convolutional neural networks (CNNs), the deep learning architecture commonly used in computer vision tasks, is usually measured in the number of parameters they have. The more parameters a neural network has, the more memory and computational power it requires.
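To make the idea of per-pixel classification concrete, here is a minimal sketch of how a semantic segmentation output can be turned into a colored image. The class names, colors, and score values are hypothetical and chosen only for illustration; a real network would produce the scores.

```python
# Hypothetical class list and display colors (not taken from any real dataset).
CLASSES = ["background", "road", "car"]
COLORS = {"background": (0, 0, 0), "road": (128, 64, 128), "car": (0, 0, 142)}

# Hypothetical per-pixel class scores for a 2x3 image:
# scores[row][col] holds one score per class.
scores = [
    [[0.90, 0.05, 0.05], [0.20, 0.70, 0.10], [0.10, 0.80, 0.10]],
    [[0.10, 0.60, 0.30], [0.10, 0.20, 0.70], [0.05, 0.15, 0.80]],
]


def segment(pixel_scores):
    """Assign each pixel the index of its highest-scoring class."""
    return [
        [max(range(len(px)), key=px.__getitem__) for px in row]
        for row in pixel_scores
    ]


def colorize(label_map):
    """Map class indices to RGB colors so the result can be displayed."""
    return [[COLORS[CLASSES[i]] for i in row] for row in label_map]


label_map = segment(scores)
color_map = colorize(label_map)
print(label_map)
```

Semantic segmentation stops at the label map. Instance segmentation would additionally have to tell apart two cars that share the same class.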

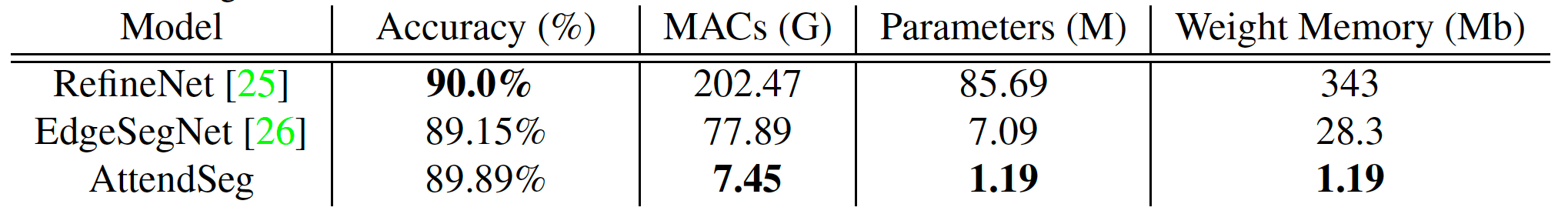

RefineNet, a popular semantic segmentation neural network, contains more than 85 million parameters. At 4 bytes per parameter, this means that an application using RefineNet requires at least 340 megabytes of memory just to run the neural network. And given that the performance of neural networks is largely dependent on hardware that can perform fast matrix multiplications, the model must be loaded on the graphics card or some other parallel computing unit, where memory is scarcer than the computer’s RAM.
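The arithmetic behind that figure is simple enough to check in a few lines. The helper name below is hypothetical, and the sketch counts only the parameters themselves, not activations or framework overhead.

```python
def model_memory_mb(num_params: int, bytes_per_param: int) -> float:
    """Memory needed to store the parameters, in megabytes (10**6 bytes)."""
    return num_params * bytes_per_param / 1_000_000


# RefineNet figures quoted above: 85 million parameters at 4 bytes each.
refinenet_mb = model_memory_mb(85_000_000, 4)
print(f"RefineNet weights: {refinenet_mb:.0f} MB")
```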

Machine learning for edge devices

Due to their hardware requirements, most applications of image segmentation need an internet connection to send images to a cloud server that can run large deep learning models. The cloud connection can pose additional limits to where image segmentation can be used. For instance, if a drone or robot will be operating in environments where there’s no internet connection, then performing image segmentation will become a challenging task. In other domains, AI agents will be working in sensitive environments, and sending images to the cloud will be subject to privacy and security constraints. The lag caused by the round trip to the cloud can be prohibitive in applications that require real-time response from the machine learning models. And it is worth noting that network hardware itself consumes a lot of power, and sending a constant stream of images to the cloud can be taxing for battery-powered devices.

For all these reasons (and a few more), edge AI and tiny machine learning (TinyML) have become hot areas of interest and research both in academia and in the applied AI sector. The goal of TinyML is to create machine learning models that can run on memory- and power-constrained devices without the need for a connection to the cloud.

With AttendSeg, the researchers at DarwinAI and the University of Waterloo attempted to address the challenges of on-device semantic segmentation.

“The idea for AttendSeg was driven by both our desire to advance the field of TinyML and market needs that we have seen as DarwinAI,” Alexander Wong, co-founder at DarwinAI and Associate Professor at the University of Waterloo, told TechTalks. “There are numerous industrial applications for highly efficient edge-ready segmentation approaches, and that’s the kind of feedback along with market needs that I see that drives such research.”

The paper describes AttendSeg as “a low-precision, highly compact deep semantic segmentation network tailored for TinyML applications.”

The AttendSeg deep learning model performs semantic segmentation at an accuracy that is almost on par with RefineNet while cutting down the number of parameters to 1.19 million. Interestingly, the researchers also found that lowering the precision of the parameters from 32 bits (4 bytes) to 8 bits (1 byte) did not result in a significant performance penalty while enabling them to shrink the memory footprint of AttendSeg by a factor of four. The model requires little more than one megabyte of memory, which is small enough to fit on most edge devices.
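The following sketch illustrates why moving from 32-bit to 8-bit parameters cuts memory by a factor of four. The article does not say which quantization scheme the researchers used, so this assumes a simple symmetric linear scheme; the function names and weight values are hypothetical.

```python
from array import array


def quantize_int8(weights):
    """Symmetric linear quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = array("b", (round(w / scale) for w in weights))
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the 8-bit integers."""
    return [v * scale for v in quantized]


# Hypothetical 32-bit float weights, stored at 4 bytes each.
weights = array("f", [0.52, -1.27, 0.003, 0.9, -0.31])
q, scale = quantize_int8(weights)

bytes_fp32 = len(weights) * weights.itemsize  # 4 bytes per parameter
bytes_int8 = len(q) * q.itemsize              # 1 byte per parameter
print("shrink factor:", bytes_fp32 // bytes_int8)

# Footprint for the parameter count quoted above, at 1 byte per parameter.
attendseg_mb = 1_190_000 * 1 / 1_000_000
print(f"AttendSeg weights at 8 bits: {attendseg_mb:.2f} MB")
```

Each restored weight differs from the original by at most half a quantization step, which is the precision traded for the smaller footprint.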

“[8-bit parameters] do not pose a limit in terms of generalizability of the network based on our experiments, and illustrate that low precision representation can be quite beneficial in such cases (you only have to use as much precision as needed),” Wong stated.

Attention condensers for computer vision

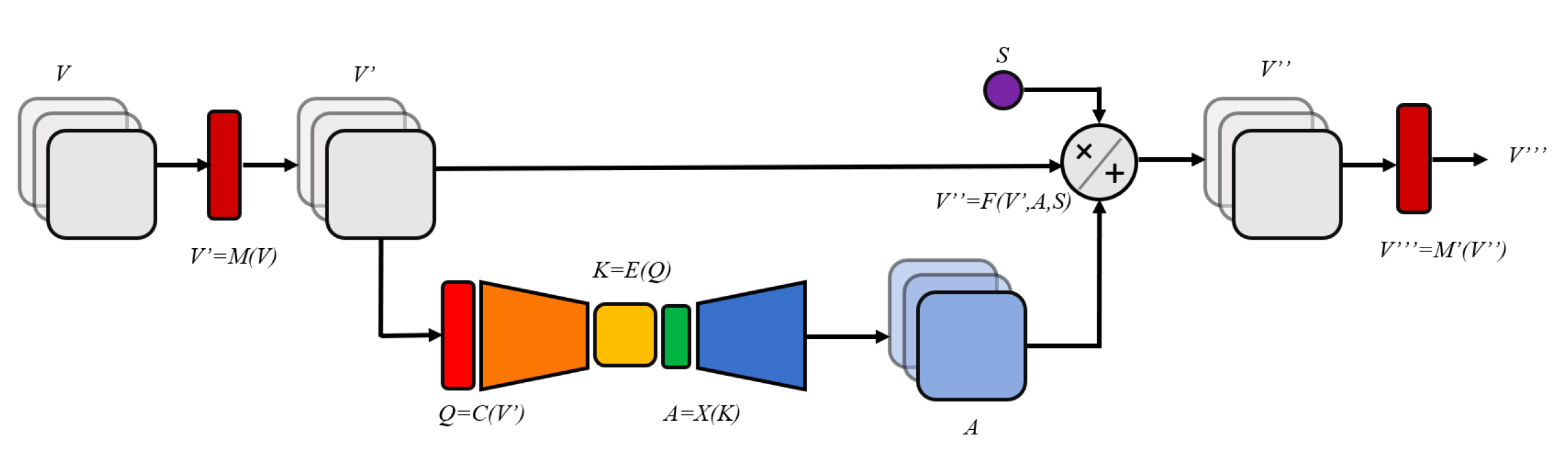

AttendSeg leverages “attention condensers” to reduce model size without compromising performance. Self-attention mechanisms are a family of techniques that improve the efficiency of neural networks by focusing on the information that matters. Self-attention techniques have been a boon to the field of natural language processing. They have been a defining factor in the success of deep learning architectures such as Transformers. While previous architectures such as recurrent neural networks had a limited capacity on long sequences of data, Transformers used self-attention mechanisms to expand their range. Deep learning models such as GPT-3 leverage Transformers and self-attention to churn out long strings of text that (at least superficially) maintain coherence over long spans.

AI researchers have also leveraged attention mechanisms to improve the performance of convolutional neural networks. Last year, Wong and his colleagues introduced attention condensers as a highly resource-efficient attention mechanism and applied them to image classifier machine learning models.
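As a rough illustration of what “focusing on the information that matters” means, the sketch below implements generic scaled dot-product self-attention of the kind used in Transformers. It is not the attention condenser design from the AttendSeg paper, and it omits the learned query, key, and value projections a real model would have. The input vectors are hypothetical.

```python
import math


def softmax(xs):
    """Turn raw scores into positive weights that sum to 1."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(tokens):
    """Scaled dot-product self-attention without learned projections.

    Simplifying assumption: queries, keys, and values are the inputs themselves.
    Returns the attended outputs and the attention weights per token.
    """
    d = len(tokens[0])
    outputs, all_weights = [], []
    for query in tokens:
        # Similarity of this token to every token, scaled by sqrt(dimension).
        scores = [
            sum(a * b for a, b in zip(query, key)) / math.sqrt(d)
            for key in tokens
        ]
        weights = softmax(scores)
        # Each output is a weighted average of all token vectors.
        out = [sum(w * v[j] for w, v in zip(weights, tokens)) for j in range(d)]
        outputs.append(out)
        all_weights.append(weights)
    return outputs, all_weights


tokens = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0]]  # hypothetical 2-D embeddings
outputs, weights = self_attention(tokens)
for row in weights:
    print([round(w, 3) for w in row])
```

The first token places more weight on the second, similar token than on the third, dissimilar one, which is the selective focus the mechanism is named for.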

“[Attention condensers] allow for very compact deep neural network architectures that can still achieve high performance, making them very well suited for edge/TinyML applications,” Wong stated.

Machine-driven design of neural networks

One of the key challenges of designing TinyML neural networks is finding the best-performing architecture while also adhering to the computational budget of the target device.

To address this challenge, the researchers used “generative synthesis,” a machine learning technique that creates neural network architectures based on specified goals and constraints. Basically, instead of manually fiddling with all kinds of configurations and architectures, the researchers provide a problem space to the machine learning model and let it discover the best combination.

“The machine-driven design process leveraged here (Generative Synthesis) requires the human to provide an initial design prototype and human-specified desired operational requirements (e.g., size, accuracy, etc.) and the MD design process takes over in learning from it and generating the optimal architecture design tailored around the operational requirements and task and data at hand,” Wong stated.

For their experiments, the researchers used machine-driven design to tune AttendSeg for Nvidia Jetson, hardware kits for robotics and edge AI applications. But AttendSeg is not limited to Jetson.

“Essentially, the AttendSeg neural network will run fast on most edge hardware compared to previously proposed networks in literature,” Wong stated. “However, if you want to generate an AttendSeg that is even more tailored for a particular piece of hardware, the machine-driven design exploration approach can be used to create a new highly customized network for it.”

AttendSeg has obvious applications for autonomous drones, robots, and vehicles, where semantic segmentation is a key requirement for navigation. But on-device segmentation can have many more applications.

“This type of highly compact, highly efficient segmentation neural network can be used for a wide variety of things, ranging from manufacturing applications (e.g., parts inspection / quality assessment, robotic control), medical applications (e.g., cell analysis, tumor segmentation), satellite remote sensing applications (e.g., land cover segmentation), and mobile application (e.g., human segmentation for augmented reality),” Wong stated.

Ben Dickson is a software engineer and the founder of TechTalks. He writes about technology, business, and politics.

This story originally appeared on Bdtechtalks.com. Copyright 2021
