
Facebook today open-sourced Droidlet, a platform for building robots that leverage natural language processing and computer vision to understand the world around them. According to Facebook, Droidlet simplifies the integration of machine learning algorithms in robots, facilitating rapid software prototyping.

Robots today can be programmed to vacuum the floor or perform a dance, but they struggle to achieve much more than that. This is because they fail to process information at a deep level. Robots can't recognize what a chair is, for example, or know that bumping into a spilled soda can will make a bigger mess.

Droidlet isn't a be-all and end-all solution to the problem, but rather a way to test out different computer vision and natural language processing models. It allows researchers to build systems that can accomplish tasks in the real world or in simulated environments like Minecraft or Facebook's Habitat, and it supports running the same system on different robots by swapping out components as needed. The platform provides a dashboard to which researchers can add debugging and visualization widgets and tools, as well as an interface for error correction and annotation. And Droidlet ships with wrappers for connecting machine learning models to robots, in addition to environments for testing vision models fine-tuned for the robot setting.

Modular design

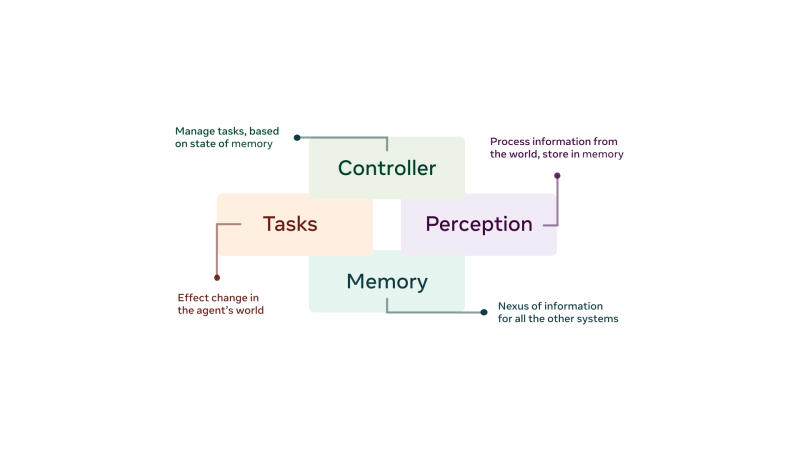

Droidlet is made up of a collection of components, some heuristic and some learned, that can be trained with static data when convenient or dynamic data where appropriate. The design consists of several module-to-module interfaces:

- A memory system that acts as a store for information across the various modules

- A set of perceptual modules that process information from the outside world and store it in memory

- A set of lower-level tasks, such as "Move three feet forward" and "Place item in hand at given coordinates," that can effect changes in a robot's environment

- A controller that decides which tasks to execute based on the state of the memory system
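To make that division of labor concrete, here is a minimal Python sketch of the four pieces listed above. It is an illustration under stated assumptions, not Droidlet's code: the class names (`AgentMemory`, `PerceptionModule`, `MoveTo`, `Controller`) are hypothetical, and the perception module simply accepts pre-labeled detections instead of running a vision model.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Tuple

# Hypothetical sketch of the memory / perception / task / controller split.
# None of these classes are Droidlet's actual API.

Position = Tuple[float, float]


@dataclass
class AgentMemory:
    """Shared store that every module reads from and writes to."""
    objects: Dict[str, Position] = field(default_factory=dict)  # label -> location
    agent_pos: Position = (0.0, 0.0)
    pending_goal: Optional[str] = None  # label the agent was asked to reach


class PerceptionModule:
    """Stand-in for a pretrained detector: writes (label, position) pairs to memory."""

    def __init__(self, memory: AgentMemory) -> None:
        self.memory = memory

    def perceive(self, detections: List[Tuple[str, Position]]) -> None:
        for label, pos in detections:
            self.memory.objects[label] = pos


@dataclass
class MoveTo:
    """A lower-level task: move the agent toward a target, at most 1 unit per axis per step."""
    target: Position

    def step(self, memory: AgentMemory) -> bool:
        x, y = memory.agent_pos
        tx, ty = self.target
        dx = max(-1.0, min(1.0, tx - x))
        dy = max(-1.0, min(1.0, ty - y))
        memory.agent_pos = (x + dx, y + dy)
        return memory.agent_pos == self.target  # True once the target is reached


class Controller:
    """Chooses which task to run by inspecting the state of memory."""

    def __init__(self, memory: AgentMemory) -> None:
        self.memory = memory
        self.task: Optional[MoveTo] = None

    def tick(self) -> None:
        goal = self.memory.pending_goal
        # Start a task only if memory says there is a goal the robot has seen.
        if self.task is None and goal in self.memory.objects:
            self.task = MoveTo(self.memory.objects[goal])
        if self.task is not None and self.task.step(self.memory):
            self.task = None
            self.memory.pending_goal = None


# Usage: perceive a chair, set a goal, and let the controller drive the task.
memory = AgentMemory()
PerceptionModule(memory).perceive([("red chair", (3.0, 1.0))])
memory.pending_goal = "red chair"
controller = Controller(memory)
for _ in range(5):
    controller.tick()
print(memory.agent_pos)  # (3.0, 1.0)
```

Because each module only talks to the others through memory, any one of them (say, a learned detector in place of `PerceptionModule`) could in principle be swapped without touching the rest, which is the property the modular design is meant to provide.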

Each of these modules can be further broken down into trainable or heuristic components, Facebook says, and the modules and dashboards can be used outside of the Droidlet ecosystem. For researchers and hobbyists, Droidlet also offers "battery-included" systems that can perceive their environment through pretrained object detection and pose estimation models and store their observations in the robot's memory. Using this representation, the systems can respond to language commands like "Go to the red chair" by tapping a pretrained neural semantic parser that converts natural language into programs.
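The language-to-program step can be illustrated with a toy example. Droidlet uses a pretrained neural semantic parser; the sketch below swaps in a single regular-expression rule purely to show the shape of the pipeline (command in, structured program out, program resolved against stored observations). The program format, function names, and memory contents are all hypothetical.

```python
import re
from typing import Dict, Optional, Tuple

# Toy stand-in for a neural semantic parser: one regex rule instead of a model.
# The program dictionary format here is hypothetical, not Droidlet's.

Position = Tuple[float, float]


def parse_command(text: str) -> Optional[dict]:
    """Map a 'go to the <object>' command to a simple program dictionary."""
    match = re.fullmatch(r"\s*go to the (.+?)\s*[.!]?\s*", text, flags=re.IGNORECASE)
    if match is None:
        return None  # command not covered by this toy grammar
    return {"action": "MOVE", "target": {"name": match.group(1).lower()}}


def execute(program: dict, memory: Dict[str, Position]) -> Optional[Position]:
    """Resolve the program's target against observations stored in memory."""
    if program.get("action") != "MOVE":
        return None
    return memory.get(program["target"]["name"])  # None if never observed


# Observations a perception module might have stored (made-up values).
memory = {"red chair": (3.0, 1.0), "blue tube": (0.5, 2.0)}

program = parse_command("Go to the red chair")
print(program)                   # {'action': 'MOVE', 'target': {'name': 'red chair'}}
print(execute(program, memory))  # (3.0, 1.0)
```

A learned parser replaces the brittle regex with a model that can handle paraphrases and nested references, but the contract stays the same: the parser emits a structured program, and grounding it depends on what perception has already written to memory.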

“The Droidlet platform supports researchers building embodied agents more generally by reducing friction in integrating machine learning models and new capabilities, whether scripted or learned, into their systems, and by providing user experiences for human-agent interaction and data annotation,” Facebook wrote in a blog post. “As more researchers build with Droidlet, they will improve its existing components and add new ones, which others in turn can then add to their own robotics projects … With Droidlet, robotics researchers can now take advantage of the significant recent progress across the field of AI and build machines that can effectively respond to complex spoken commands like ‘Pick up the blue tube next to the fuzzy chair that Bob is sitting in.’”
