
Humans understand events in the world contextually, performing what’s known as multimodal reasoning across time to make inferences about the past, present, and future. Given text and an image that seem innocuous when considered separately (for example, “Look how many people love you” and a picture of a barren desert), people recognize that these elements take on potentially hurtful connotations when they’re paired or juxtaposed.

Even the best AI systems struggle in this area. But there’s been progress, most recently from a team at the Allen Institute for Artificial Intelligence and the University of Washington’s Paul G. Allen School of Computer Science & Engineering. In a preprint paper published this month, the researchers detail Multimodal Neural Script Knowledge Models (Merlot), a system that learns to match images in videos with words and even follow events globally over time by watching millions of YouTube videos with transcribed speech. It does all this in an unsupervised manner, meaning the videos haven’t been labeled or categorized, which forces the system to learn from the videos’ inherent structures.

Learning from videos

Our capacity for commonsense reasoning is shaped by how we experience causes and effects. Teaching machines this type of “script knowledge” is a significant challenge, in part because of the amount of data it requires. For example, even a single photo of people dining at a restaurant can imply a wealth of information, like the fact that the people had to agree on where to go, meet up, and enter the restaurant before sitting down.

Merlot attempts to internalize these concepts by watching YouTube videos. Lots of YouTube videos. Drawing on a dataset of 6 million videos, the researchers trained the model to match individual frames with a contextualized representation of the video transcripts, divided into segments. The dataset contained instructional videos, lifestyle vlogs of everyday events, and YouTube’s auto-suggested videos for popular topics like “science” and “home improvement,” each chosen explicitly to encourage the model to learn about a broad range of objects, actions, and scenes.
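To make the frame-to-transcript matching idea concrete, here is a minimal sketch of a matching loss in plain Python. It is an illustration only, not the researchers’ implementation: the two-dimensional vectors, the dot-product scoring, and the function names `matching_loss` and `best_match` are all assumptions made for the example.

```python
import math

def dot(a, b):
    """Dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))

def matching_loss(frame_vecs, segment_vecs):
    """Average cross-entropy of picking segment i for frame i.

    Assumes frame i and segment i come from the same moment in a video;
    every other segment in the list acts as a negative example.
    """
    total = 0.0
    for i, frame in enumerate(frame_vecs):
        scores = [dot(frame, seg) for seg in segment_vecs]
        max_score = max(scores)  # subtract the max for numerical stability
        log_norm = max_score + math.log(sum(math.exp(s - max_score) for s in scores))
        total += log_norm - scores[i]
    return total / len(frame_vecs)

def best_match(frame, segment_vecs):
    """Index of the segment whose vector scores highest against the frame."""
    scores = [dot(frame, seg) for seg in segment_vecs]
    return max(range(len(scores)), key=scores.__getitem__)

# Hypothetical toy embeddings: two frames and two transcript segments.
frames = [[1.0, 0.0], [0.0, 1.0]]
aligned = [[2.0, 0.0], [0.0, 2.0]]   # segment i matches frame i
swapped = [[0.0, 2.0], [2.0, 0.0]]   # segments paired with the wrong frames

loss_aligned = matching_loss(frames, aligned)
loss_swapped = matching_loss(frames, swapped)
```

In this toy setup the loss is lower when each frame sits next to its own segment, which is the signal a model trained this way would use to pull matching frames and words together.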

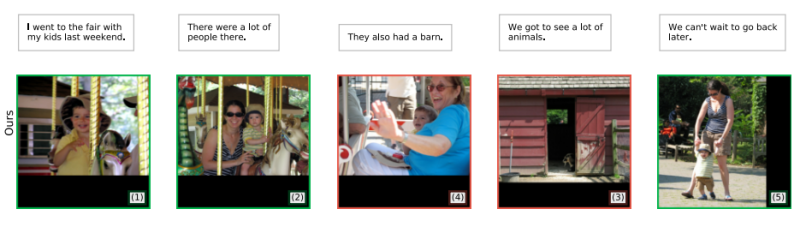

The goal was to teach Merlot to contextualize the frame-level representations over time and over spoken words so it could reorder scrambled video frames and make sense of “noisy” transcripts, including those with erroneously lowercase text, missing punctuation, and filler words like “umm,” “hmm,” and “yeah.” The researchers largely achieved this. They reported that in a series of qualitative and quantitative tests, Merlot had a strong “out-of-the-box” understanding of everyday events and situations, enabling it to take a scrambled sequence of events from a video and order the frames to match the captions in a coherent narrative, like people riding a carousel.
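The reordering task can be pictured with a small sketch in Python. It assumes a model has already produced a score for each pair of frames saying how likely one is to come before the other; the frame labels, the scores, and the `best_order` helper are all hypothetical, and the brute-force search stands in for whatever the actual system does.

```python
from itertools import permutations

def best_order(frame_ids, before_score):
    """Return the ordering that maximizes the summed pairwise 'comes before' scores.

    before_score[(a, b)] is an assumed model output: how strongly the model
    believes frame a precedes frame b. Brute force is fine for a few frames.
    """
    def total(order):
        return sum(
            before_score[(order[i], order[j])]
            for i in range(len(order))
            for j in range(i + 1, len(order))
        )
    return max(permutations(frame_ids), key=total)

# Made-up scores for three frames from a carousel video.
scores = {
    ("queue", "ride"): 0.9, ("ride", "queue"): 0.1,
    ("ride", "exit"): 0.8, ("exit", "ride"): 0.2,
    ("queue", "exit"): 0.95, ("exit", "queue"): 0.05,
}

scrambled = ["exit", "queue", "ride"]
ordered = best_order(scrambled, scores)
```

With these made-up scores, the search recovers the narrative order of queueing, riding, and exiting.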

Future work

Merlot is only the latest work on video understanding in the AI research community. In 2019, researchers at Georgia Institute of Technology and the University of Alberta created a system that could automatically generate commentary for “let’s play” videos of video games. More recently, researchers at Microsoft published a preprint paper describing a system that could determine whether statements about video clips were true by learning from visual and textual clues. And Facebook has trained a computer vision system that can automatically learn audio, textual, and visual representations from publicly available Facebook videos.

The Allen Institute and University of Washington researchers note that, like prior work, Merlot has limitations, some owing to the data chosen to train the model. For example, Merlot could exhibit undesirable biases because it was only trained on English data and largely local news segments, which can spend a lot of time covering crime stories in a sensationalized way. It’s “very likely” that training models like Merlot on mostly news content could cause them to learn racist patterns as well as sexist patterns, the researchers concede, given that the most popular YouTubers in most countries are men. Studies have demonstrated a correlation between watching local news and having more explicit, racialized beliefs about crime.

For these reasons, the team advises against deploying Merlot into a production environment. But they say Merlot is still a promising step toward future work in multimodal understanding. “We hope that Merlot can inspire future work for learning vision+language representations in a more humanlike fashion compared to learning from literal captions and their corresponding images,” the coauthors wrote. “The model achieves strong performance on tasks requiring event-level reasoning over videos and static images.”
