Cerebras Systems said its CS-2 Wafer Scale Engine 2 processor is a “brain-scale” chip that can power AI models with more than 120 trillion parameters.

Parameters are the parts of a machine learning model that are learned from historical training data in a neural network. The more parameters, the more sophisticated the AI model. That is why Cerebras believes its latest processor, which is built on an entire wafer rather than as individual chips, is going to be so powerful, CEO Andrew Feldman said in an interview with VentureBeat.

Feldman gave us a preview of his talk at the Hot Chips semiconductor design conference, which is being held online today. The 120 trillion parameters news follows an announcement by Google researchers in January that they had trained a model with a total of 1.6 trillion parameters. Feldman noted that Google had raised the number of parameters about 1,000 times in just two years.

“The number of parameters, the amount of memory necessary, have grown exponentially,” Feldman said. “We have 1,000 times larger models requiring more than 1,000 times more compute, and that has happened in two years. We are announcing our ability to support up to 120 trillion parameters, to cluster 192 CS-2s together. Not only are we building bigger and faster clusters, but we’re making those clusters more efficient.”
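To put that growth rate in perspective, here is a rough back-of-the-envelope sketch. It assumes smooth exponential growth over exactly 24 months, which is a simplification made for illustration and not something Feldman stated; the variable names are ours.

```python
import math

growth_factor = 1_000   # "1,000 times larger models", per the quote
period_months = 24      # "in two years"

# Number of doublings needed to reach the growth factor,
# and the implied time between doublings.
doublings = math.log2(growth_factor)
doubling_months = period_months / doublings

print(f"{doublings:.1f} doublings, one every {doubling_months:.1f} months")
```

Under that assumption, model size would have doubled roughly every two and a half months.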

Image Credit: Cerebras

Feldman said the technology will expand the size of the largest AI neural networks by 100 times.

“Larger networks, such as GPT-3, have already transformed the natural language processing (NLP) landscape, making possible what was previously unimaginable,” he said. “The industry is moving past a trillion parameter models, and we are extending that boundary by two orders of magnitude, enabling brain-scale neural networks with 120 trillion parameters.”

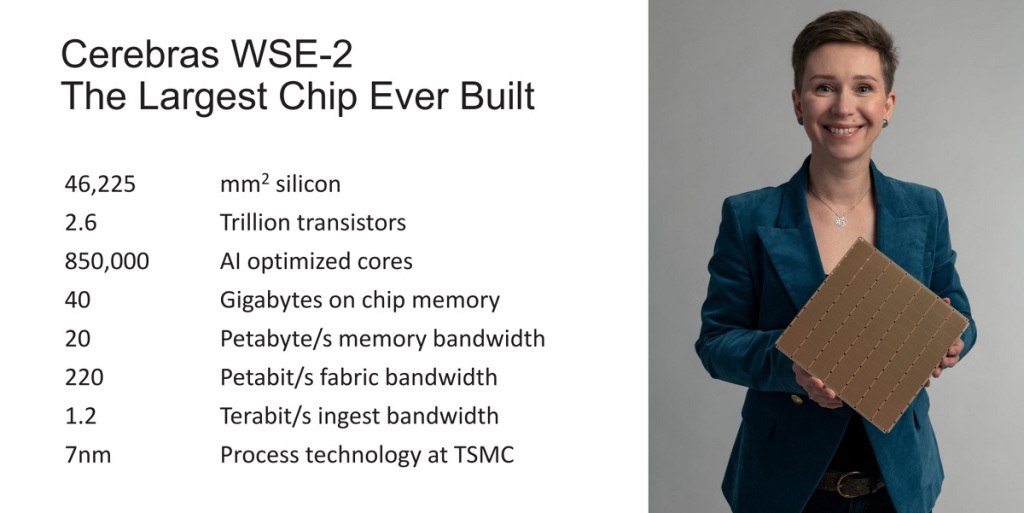

Feldman said the Cerebras CS-2 is powered by the Wafer Scale Engine 2 (WSE-2), the largest chip ever made and the fastest AI processor to date. Purpose-built for AI work, the 7-nanometer WSE-2 has 2.6 trillion transistors and 850,000 AI-optimized cores. By comparison, the largest graphics processing unit has only 54 billion transistors, about 2.55 trillion fewer than the WSE-2. The WSE-2 also has 123 times more cores and 1,000 times more high-performance on-chip memory than graphics processing unit competitors.
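The comparison can be checked with a few lines of arithmetic. This is only a sketch using the figures quoted above; the implied GPU core count is our own inference from the "123 times" ratio, not a number given by Cerebras.

```python
# Figures quoted in the article.
WSE2_TRANSISTORS = 2.6e12   # 2.6 trillion
GPU_TRANSISTORS = 54e9      # 54 billion (largest GPU cited)
WSE2_CORES = 850_000

transistor_gap = WSE2_TRANSISTORS - GPU_TRANSISTORS
transistor_ratio = WSE2_TRANSISTORS / GPU_TRANSISTORS

# Inferred, not stated: the GPU core count that would make
# 850,000 cores "123 times" as many.
implied_gpu_cores = WSE2_CORES / 123

print(f"gap: {transistor_gap / 1e12:.3f} trillion transistors")
print(f"ratio: about {transistor_ratio:.0f}x")
print(f"implied GPU cores: about {implied_gpu_cores:.0f}")
```

The gap works out to 2.546 trillion transistors, which rounds to the 2.55 trillion cited, and the WSE-2 has roughly 48 times the transistor count of the GPU.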

The Cerebras wafers

Image Credit: Cerebras

The CS-2 is built for supercomputing tasks, and it is the second time since 2019 that Los Altos, California-based Cerebras has unveiled a chip that is basically an entire wafer.

Chipmakers normally slice a wafer from a 12-inch-diameter ingot of silicon to process in a chip factory. Once processed, the wafer is sliced into hundreds of separate chips that can be used in electronic hardware.

But Cerebras, started by Feldman (who also founded SeaMicro), takes that wafer and makes a single, massive chip out of it. Each piece of the chip, dubbed a core, is interconnected in a sophisticated way to other cores. The interconnections are designed to keep all the cores functioning at high speeds so the transistors can work together as one. AI was used to design the actual chip itself, Synopsys CEO Aart de Geus said in an interview with VentureBeat.

Cerebras puts these wafers in a standard datacenter computing rack and connects them all together.

Brain-scale computing

Image Credit: Cerebras

To make a comparison, Feldman noted that the human brain contains on the order of 100 trillion synapses. As noted, the largest AI hardware clusters have been on the order of 1% of a human brain’s scale, or about 1 trillion synapse equivalents, or parameters. At only a fraction of full human brain-scale, these clusters of graphics processors consume acres of space and megawatts of power and require dedicated teams to operate.

But Feldman said a single CS-2 accelerator, the size of a dorm room refrigerator, can support models of more than 120 trillion parameters in size.

Four innovations

Image Credit: Cerebras

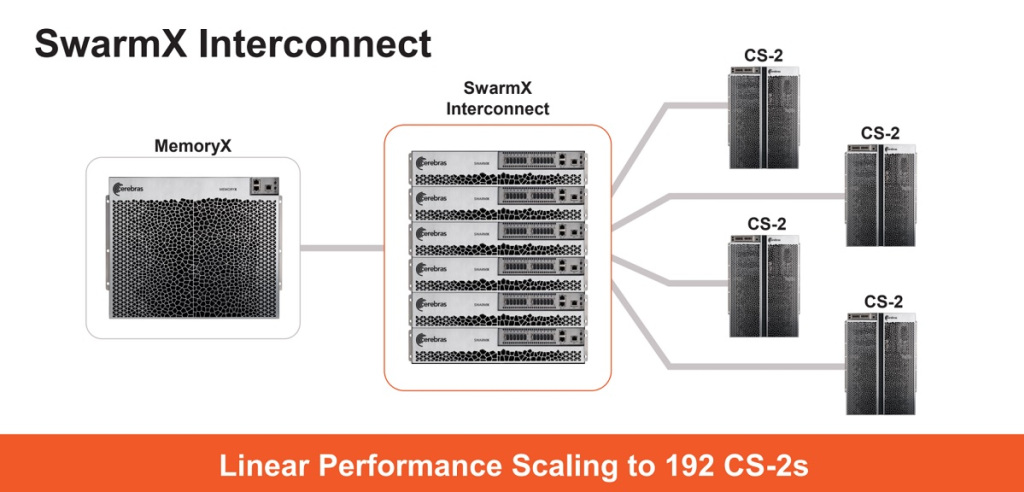

On top of that, he said Cerebras’ new technology portfolio contains four innovations: Cerebras Weight Streaming, a new software execution architecture; Cerebras MemoryX, a memory extension technology; Cerebras SwarmX, a high-performance interconnect fabric technology; and Selectable Sparsity, a dynamic sparsity harvesting technology.

The Cerebras Weight Streaming technology can store model parameters off-chip while delivering the same training and inference performance as if they were on-chip. This new execution model disaggregates compute and parameter storage, allowing researchers to flexibly scale size and speed independently, and eliminates the latency and memory bandwidth issues that challenge large clusters of small processors.

This significantly simplifies the workload distribution model and is designed so users can scale from using 1 to up to 192 CS-2s with no software changes, Feldman said.
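The idea of separating parameter storage from compute can be illustrated with a toy sketch. This is not Cerebras code or its API: `ExternalWeightStore`, `stream`, and `forward` are hypothetical names invented for illustration, and the example assumes a simple feed-forward network in which only one layer's weights are held by the compute side at a time.

```python
def relu(v):
    """Element-wise ReLU on a list of floats."""
    return [max(0.0, a) for a in v]


def matvec(w, x):
    """Multiply matrix w (list of rows) by vector x."""
    return [sum(wi * xi for wi, xi in zip(row, x)) for row in w]


class ExternalWeightStore:
    """Hypothetical stand-in for off-chip parameter storage."""

    def __init__(self, layers):
        self._layers = layers

    def stream(self):
        # Yield one layer's weights at a time, so the compute side
        # never needs to hold the whole model at once.
        for w in self._layers:
            yield w


def forward(store, x):
    """Run a forward pass, pulling each layer's weights as needed."""
    activations = x
    for w in store.stream():
        activations = relu(matvec(w, activations))
    return activations


store = ExternalWeightStore([
    [[1.0, -1.0], [0.5, 0.5]],   # layer 1: 2 inputs -> 2 outputs
    [[2.0, 1.0]],                # layer 2: 2 inputs -> 1 output
])
print(forward(store, [1.0, 2.0]))  # prints [1.5]
```

In this sketch, growing the model means adding layers to the store while the compute loop stays unchanged, which is the kind of independent scaling the article describes.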

The Cerebras MemoryX will provide the second-generation Cerebras Wafer Scale Engine (WSE-2) with up to 2.4 petabytes of high-performance memory, all of which behaves as if it were on-chip. With MemoryX, the CS-2 can support models with up to 120 trillion parameters.

Cerebras SwarmX is a high-performance, AI-optimized communication fabric that extends the Cerebras Swarm on-chip fabric to off-chip. SwarmX is designed to enable Cerebras to connect up to 163 million AI-optimized cores across up to 192 CS-2s, working in concert to train a single neural network.
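The cluster figures are consistent with the per-chip numbers given earlier, as a quick calculation shows. The sketch below assumes decimal petabytes (10^15 bytes); the bytes-per-parameter result is our own derived estimate, and the article does not say how that memory would be divided between weights and other training state.

```python
CORES_PER_CS2 = 850_000   # AI-optimized cores per WSE-2
MAX_CS2 = 192             # maximum cluster size quoted
MEMORYX_BYTES = 2.4e15    # 2.4 petabytes, assuming decimal units
MAX_PARAMS = 120e12       # 120 trillion parameters

total_cores = CORES_PER_CS2 * MAX_CS2
bytes_per_param = MEMORYX_BYTES / MAX_PARAMS

print(f"total cores: {total_cores:,}")
print(f"memory per parameter: {bytes_per_param:.0f} bytes")
```

That gives 163.2 million cores, matching the "up to 163 million" figure, and about 20 bytes of MemoryX capacity per parameter at the 120 trillion parameter limit.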

And Selectable Sparsity allows users to select the level of weight sparsity in their model and provides a direct reduction in FLOPs and time-to-answer. Weight sparsity is an exciting area of ML research that has been challenging to study, as it is extremely inefficient on graphics processing units.

Selectable Sparsity enables the CS-2 to accelerate work and use every available type of sparsity, including unstructured and dynamic weight sparsity, to produce answers in less time.
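Why sparsity cuts FLOPs can be seen in a small sketch. This does not represent how the CS-2 hardware harvests sparsity; it is a plain illustration, with function names of our own choosing, of a matrix-vector product that skips zero weights and counts the multiply-adds actually performed.

```python
import random


def sparse_matvec(weights, x):
    """Multiply a matrix by a vector, skipping zero weights.

    Returns the result and the number of multiply-adds performed.
    """
    y = [0.0] * len(weights)
    macs = 0
    for i, row in enumerate(weights):
        for j, w in enumerate(row):
            if w != 0.0:          # unstructured sparsity: skip any zero
                y[i] += w * x[j]
                macs += 1
    return y, macs


def random_sparse_matrix(rows, cols, sparsity, rng):
    """Build a matrix where each weight is zero with probability `sparsity`."""
    return [
        [0.0 if rng.random() < sparsity else rng.uniform(-1, 1)
         for _ in range(cols)]
        for _ in range(rows)
    ]


rng = random.Random(0)
rows, cols = 64, 64
x = [rng.uniform(-1, 1) for _ in range(cols)]
for sparsity in (0.0, 0.5, 0.9):
    w = random_sparse_matrix(rows, cols, sparsity, rng)
    _, macs = sparse_matvec(w, x)
    print(f"sparsity {sparsity:.0%}: {macs} of {rows * cols} multiply-adds")
```

The work done falls roughly in proportion to the fraction of zero weights, but only if the hardware can skip zeros at arbitrary positions, which is what makes unstructured sparsity hard to exploit on processors built for dense math.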

This combination of technologies will allow users to unlock brain-scale neural networks and distribute work over enormous clusters of AI-optimized cores with push-button ease, Feldman said.

Rick Stevens, associate director at the federal Argonne National Laboratory, said in a statement that the last several years have shown that the more parameters, the better the results for natural language processing models. Cerebras’ inventions could transform the industry, he said.

Founded in 2016, Cerebras has more than 350 employees. The company will announce customers in the fourth quarter.
