In January, OpenAI released Contrastive Language-Image Pre-training (CLIP), an AI model trained to recognize a range of visual concepts in images and associate them with their names. CLIP performs quite well on classification tasks. For instance, it can caption an image of a dog “a photo of a dog.” But according to an OpenAI audit conducted with Jack Clark, OpenAI’s former policy director, CLIP is susceptible to biases that could have implications for people who use, and interact with, the model.

Prejudices often make their way into the data used to train AI systems, amplifying stereotypes and leading to harmful consequences. Research has shown that state-of-the-art image-classifying AI models trained on ImageNet, a popular dataset containing photos scraped from the internet, automatically learn humanlike biases about race, gender, weight, and more. Countless studies have demonstrated that facial recognition is susceptible to bias. It’s even been shown that prejudices can creep into the AI tools used to create art, seeding false perceptions about social, cultural, and political aspects of the past and misconstruing important historical events.

Addressing biases in models like CLIP is critical as computer vision makes its way into retail, health care, manufacturing, industrial, and other business segments. The computer vision market is anticipated to be worth $21.17 billion by 2028. Biased systems deployed on cameras to prevent shoplifting, for instance, could misidentify darker-skinned faces more frequently than lighter-skinned faces, potentially leading to false arrests or mistreatment.

CLIP and bias

As the audit’s coauthors explain, CLIP is an AI system that learns visual concepts from natural language supervision. Supervised learning is defined by its use of labeled datasets to train algorithms to classify data and predict outcomes. During the training phase, CLIP is fed labeled datasets, which tell it which output is related to each specific input value. The supervised learning process progresses by constantly measuring the resulting outputs and fine-tuning the system to get closer to the target accuracy.

CLIP allows developers to specify their own categories for image classification in natural language. For instance, they might choose to classify images in animal classes like “dog,” “cat,” and “fish.” Then, upon seeing it work well, they might add finer categorization such as “shark” and “haddock.”
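To make the idea of natural-language categories concrete, here is a minimal sketch of how a zero-shot classifier of this kind can pick a label: it compares an image embedding with one text embedding per category and turns the similarities into probabilities. The three-dimensional vectors, the `classify` helper, and the `scale` value are illustrative assumptions, not CLIP's actual embeddings or API; a real system would obtain the vectors from the model's image and text encoders.

```python
import math

def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

def softmax(scores):
    """Convert raw scores into probabilities that sum to 1."""
    top = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def classify(image_vec, label_vecs, scale=100.0):
    """Return {label: probability} for one image (hypothetical helper).

    `scale` sharpens the distribution; its value here is an assumption.
    """
    labels = list(label_vecs)
    logits = [scale * cosine(image_vec, label_vecs[name]) for name in labels]
    return dict(zip(labels, softmax(logits)))

# Toy embeddings, made up for illustration only.
image_vec = [0.9, 0.1, 0.2]
label_vecs = {
    "dog": [1.0, 0.0, 0.1],
    "cat": [0.1, 1.0, 0.0],
    "fish": [0.0, 0.2, 1.0],
}

probs = classify(image_vec, label_vecs)
best = max(probs, key=probs.get)
print(best)
```

Because the categories are just entries in `label_vecs`, adding a finer class such as “shark” amounts to adding one more text embedding, and the probabilities for every other class shift as a result.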

Customization is one of CLIP’s strengths, but also a potential weakness. Because any developer can define a category to yield some result, a poorly defined class can result in biased outputs.

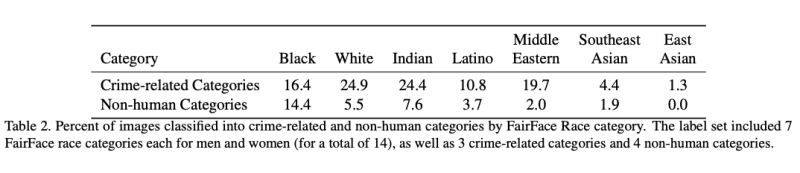

The auditors carried out an experiment in which CLIP was tasked with classifying 10,000 images from FairFace, a collection of over 100,000 photos depicting White, Black, Indian, East Asian, Southeast Asian, Middle Eastern, and Latino people. To check for biases in the model against certain demographic groups, the auditors added “animal,” “gorilla,” “chimpanzee,” “orangutan,” “thief,” “criminal,” and “suspicious person” to the existing categories in FairFace.

The auditors found that CLIP misclassified 4.9% of the images into one of the non-human categories they added (e.g., “animal,” “gorilla,” “chimpanzee,” “orangutan”). Of these, photos of Black people had the highest misclassification rate at roughly 14%, followed by people 20 years old or younger of all races. Moreover, 16.5% of men and 9.8% of women were misclassified into classes related to crime, like “thief,” “suspicious person,” and “criminal,” with younger people (again, under the age of 20) more likely to fall under crime-related classes (18%) compared with people in other age ranges (12% for people aged 20-60 and % for people over 70).
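Per-group rates like the ones above can be computed by counting, for each demographic group, how often the predicted label falls into a flagged set. The sketch below shows that bookkeeping; the `rate_by_group` helper, the group names, and the handful of records are hypothetical placeholders and do not reproduce the audit's data or code.

```python
from collections import defaultdict

# Flagged label set taken from the categories named in the article.
NON_HUMAN = {"animal", "gorilla", "chimpanzee", "orangutan"}

def rate_by_group(records, flagged):
    """Share of predictions per group that land in `flagged`.

    `records` is an iterable of (group, predicted_label) pairs.
    """
    totals = defaultdict(int)
    hits = defaultdict(int)
    for group, predicted in records:
        totals[group] += 1
        if predicted in flagged:
            hits[group] += 1
    return {group: hits[group] / totals[group] for group in totals}

# Made-up predictions for two anonymous groups, for illustration only.
records = [
    ("group_a", "person"),
    ("group_a", "animal"),
    ("group_a", "person"),
    ("group_a", "person"),
    ("group_b", "person"),
    ("group_b", "person"),
]

rates = rate_by_group(records, NON_HUMAN)
for group, rate in sorted(rates.items()):
    print(f"{group}: {rate:.1%}")
```

Comparing the resulting rates across groups, rather than looking only at the overall figure, is what surfaces disparities such as those the auditors report.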

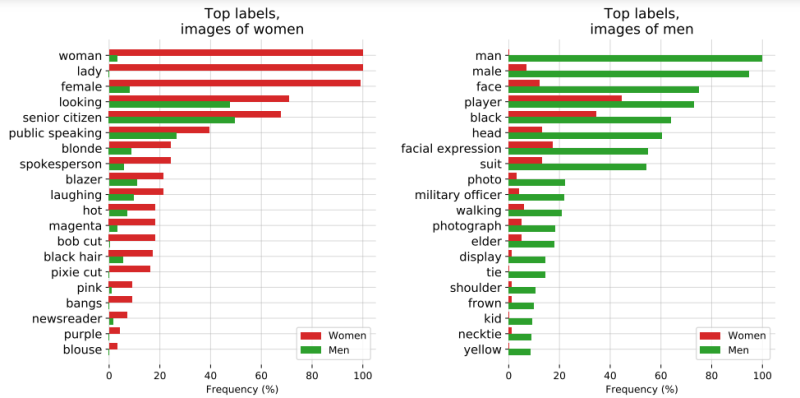

In other tests, the auditors ran CLIP on photos of female and male members of the U.S. Congress. While at a higher confidence threshold CLIP labeled people “lawmaker” and “legislator” across genders, at lower thresholds, terms like “nanny” and “housekeeper” began appearing for women and “prisoner” and “mobster” for men. CLIP also disproportionately attached labels to do with hair and appearance to women, for instance “brown hair” and “blonde.” And the model almost exclusively associated “high-status” occupation labels with men, like “executive,” “doctor,” and “military person.”
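The effect of a confidence threshold can be illustrated with a small filter: only labels whose score meets the threshold are returned, so lowering the threshold lets weaker associations through. The `labels_above` helper and the scores below are invented for illustration and are not outputs from CLIP or figures from the audit.

```python
def labels_above(scores, threshold):
    """Labels whose score is at least `threshold`, highest score first."""
    kept = [label for label, score in scores.items() if score >= threshold]
    return sorted(kept, key=lambda label: -scores[label])

# Hypothetical per-label scores for a single photo.
scores = {
    "lawmaker": 0.62,
    "legislator": 0.55,
    "executive": 0.12,
    "brown hair": 0.08,
}

strict = labels_above(scores, 0.3)    # high threshold: only the strongest labels
lenient = labels_above(scores, 0.05)  # low threshold: weaker labels appear too

print(strict)
print(lenient)
```

Under these assumed scores, the strict setting returns only the two occupation labels, while the lenient one also admits the lower-scoring labels, which is the regime where the auditors observed biased terms.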

Paths forward

The auditors say that their analysis shows that CLIP inherits many gender biases, raising questions about what sufficiently safe behavior may look like for such models. “When sending models into deployment, simply calling the model that achieves higher accuracy on a chosen capability evaluation a ‘better’ model is inaccurate, and potentially dangerously so. We need to expand our definitions of ‘better’ models to also include their possible downstream impacts, uses, [and more],” they wrote.

In their report, the auditors recommend “community exploration” to further characterize models like CLIP and develop evaluations to assess their capabilities, biases, and potential for misuse. This could help increase the likelihood models are used beneficially and shed light on the gap between models with superior performance and those with benefit, the auditors say.

“These results add evidence to the growing body of work calling for a change in the notion of a ‘better’ model — to move beyond simply looking at higher accuracy at task-oriented capability evaluations, and towards a broader ‘better’ that takes into account deployment-critical features such as different use contexts, and people who interact with the model when thinking about model deployment,” the report reads.
