Machine learning has become an important component of many applications we use today. And adding machine learning capabilities to applications is becoming increasingly easy. Many ML libraries and online services don’t even require a thorough knowledge of machine learning.

However, even easy-to-use machine learning systems come with their own challenges. Among them is the threat of adversarial attacks, which has become one of the important concerns of ML applications.

Adversarial attacks are different from other types of security threats that programmers are used to dealing with. Therefore, the first step to countering them is to understand the different types of adversarial attacks and the weak spots of the machine learning pipeline.

In this post, I will try to provide a zoomed-out view of the adversarial attack and defense landscape with help from a video by Pin-Yu Chen, AI researcher at IBM. Hopefully, this can help programmers and product managers who don’t have a technical background in machine learning get a better grasp of how they can spot threats and protect their ML-powered applications.

1- Know the difference between software bugs and adversarial attacks

Software bugs are well-known among developers, and we have plenty of tools to find and fix them. Static and dynamic analysis tools find security bugs. Compilers can find and flag deprecated and potentially harmful code use. Unit tests can make sure functions respond correctly to different kinds of input. Anti-malware and other endpoint solutions can find and block malicious programs and scripts in the browser and on the computer’s hard drive. Web application firewalls can scan and block harmful requests to web servers, such as SQL injection commands and some types of DDoS attacks. Code and app hosting platforms such as GitHub, Google Play, and Apple App Store have plenty of behind-the-scenes processes and tools that vet applications for security.

In a nutshell, although imperfect, the traditional cybersecurity landscape has matured to deal with different threats.

But the nature of attacks against machine learning and deep learning systems is different from other cyber threats. Adversarial attacks bank on the complexity of deep neural networks and their statistical nature to find ways to exploit them and modify their behavior. You can’t detect adversarial vulnerabilities with the classic tools used to harden software against cyber threats.

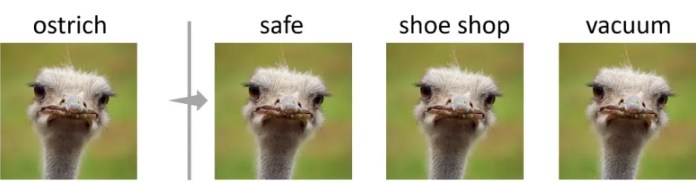

In recent years, adversarial examples have caught the attention of tech and business reporters. You’ve probably seen some of the many articles that show how machine learning models mislabel images that have been manipulated in ways that are imperceptible to the human eye.

While most examples show attacks against image classification machine learning systems, other types of media can also be manipulated with adversarial examples, including text and audio.

“It is a kind of universal risk and concern when we are talking about deep learning technology in general,” Chen says.

One misconception about adversarial attacks is that they only affect ML models that perform poorly on their main tasks. But experiments by Chen and his colleagues show that, in general, models that perform their tasks more accurately are less robust against adversarial attacks.

“One trend we observe is that more accurate models seem to be more sensitive to adversarial perturbations, and that creates an undesirable tradeoff between accuracy and robustness,” he says.

Ideally, we want our models to be both accurate and robust against adversarial attacks.

2- Know the impact of adversarial attacks

In adversarial attacks, context matters. As deep learning becomes capable of performing complicated tasks in computer vision and other fields, it is slowly finding its way into sensitive domains such as healthcare, finance, and autonomous driving.

But adversarial attacks show that the decision-making processes of deep learning and humans are fundamentally different. In safety-critical domains, adversarial attacks can endanger the life and health of the people who will be directly or indirectly using the machine learning models. In areas like finance and recruitment, they can deprive people of their rights and cause reputational damage to the company that runs the models. In security systems, attackers can game the models to bypass facial recognition and other ML-based authentication systems.

Overall, adversarial attacks cause a trust problem with machine learning algorithms, especially deep neural networks. Many organizations are reluctant to use them due to the unpredictable nature of the errors and attacks that can happen.

If you’re planning to use any sort of machine learning, think about the impact that adversarial attacks can have on the function and decisions that your application makes. In some cases, using a lower-performing but predictable ML model might be better than one that can be manipulated by adversarial attacks.

3- Know the threats to ML models

The term adversarial attack is often used loosely to refer to different types of malicious activity against machine learning models. But adversarial attacks differ based on what part of the machine learning pipeline they target and the kind of activity they involve.

Basically, we can divide the machine learning pipeline into the “training phase” and “test phase.” During the training phase, the ML team gathers data, selects an ML architecture, and trains a model. In the test phase, the trained model is evaluated on examples it hasn’t seen before. If it performs on par with the desired criteria, then it is deployed for production.

Adversarial attacks that are unique to the training phase include data poisoning and backdoors. In data poisoning attacks, the attacker inserts manipulated data into the training dataset. During training, the model tunes its parameters on the poisoned data and becomes sensitive to the adversarial perturbations they contain. A poisoned model will have erratic behavior at inference time. Backdoor attacks are a special type of data poisoning, in which the adversary implants visual patterns in the training data. After training, the attacker uses those patterns during inference time to trigger specific behavior in the target ML model.
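
To make the backdoor idea concrete, here is a minimal sketch of what a poisoned training set could look like. It is a toy illustration, not a description of any specific published attack: the images are plain nested lists of grayscale values, and the function names, the corner-square trigger, and the 10 percent poisoning rate are all assumptions made for the example.

```python
import random

def add_trigger(image, size=2, value=1.0):
    """Return a copy of a 2D grayscale image with a bright square
    stamped in the bottom-right corner (the hypothetical trigger)."""
    patched = [row[:] for row in image]
    height, width = len(patched), len(patched[0])
    for r in range(height - size, height):
        for c in range(width - size, width):
            patched[r][c] = value
    return patched

def poison_dataset(images, labels, target_label, fraction=0.1, seed=0):
    """Stamp the trigger on a random fraction of the images and relabel
    them as target_label. Returns new lists plus the poisoned indices."""
    rng = random.Random(seed)
    n_poison = int(len(images) * fraction)
    chosen = set(rng.sample(range(len(images)), n_poison))
    new_images, new_labels = [], []
    for i, (img, lab) in enumerate(zip(images, labels)):
        if i in chosen:
            new_images.append(add_trigger(img))
            new_labels.append(target_label)
        else:
            new_images.append(img)
            new_labels.append(lab)
    return new_images, new_labels, sorted(chosen)
```

A model trained on such a set can learn to associate the corner square with the target label while behaving normally on clean inputs, which is why a handful of altered samples in a large dataset is so hard to spot by eye.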

Test phase or “inference time” attacks are the types of attacks that target the model after training. The most popular type is “model evasion,” which is basically the typical adversarial examples that have become popular. An attacker creates an adversarial example by starting with a normal input (e.g., an image) and gradually adding noise to it to skew the target model’s output toward the desired outcome (e.g., a specific output class or general loss of confidence).
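
The “gradually adding noise” step can be sketched on a toy model. The example below assumes a tiny logistic classifier whose weights the attacker already knows (a white-box setting) and nudges each input feature a small step at a time until the prediction flips. The weights, step size, and function names are made up for illustration; real attacks on deep networks follow the same idea but compute gradients through the whole network.

```python
import math

def predict(weights, bias, x):
    """Score for class 1 from a toy logistic model."""
    z = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1.0 / (1.0 + math.exp(-z))

def sign(v):
    return (v > 0) - (v < 0)

def evade(weights, bias, x, step=0.05, max_steps=100):
    """Take small steps that lower the class-1 score until it drops
    below 0.5 or the step budget runs out. Features stay in [0, 1]."""
    adv = list(x)
    for _ in range(max_steps):
        if predict(weights, bias, adv) < 0.5:
            break
        # For a logistic model, the score's gradient with respect to each
        # feature has the sign of that feature's weight, so step against it.
        adv = [min(1.0, max(0.0, a - step * sign(w)))
               for a, w in zip(adv, weights)]
    return adv

weights, bias = [2.0, -1.0, 0.5], -0.2
original = [0.6, 0.2, 0.4]
adversarial = evade(weights, bias, original)
```

With these made-up numbers, the original input is classified as class 1 and the perturbed copy is not, even though no feature moved by more than about 0.3.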

Another class of inference-time attacks tries to extract sensitive information from the target model. For example, membership inference attacks use different methods to trick the target ML model into revealing information about its training data. If the training data included sensitive information such as credit card numbers or passwords, these types of attacks can be very damaging.
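
One simple way to picture membership inference is a confidence test: models often respond more confidently to examples they were trained on. The sketch below is a deliberately simplified stand-in, assuming a toy “model” whose confidence decays with the distance to its nearest training point. The model, the threshold, and the function names are all assumptions for illustration, and real attacks are considerably more involved.

```python
import math

def confidence(training_points, x):
    """Toy overfit model: confidence is 1.0 on a training point and
    decays with the distance to the closest one."""
    nearest = min(math.dist(x, p) for p in training_points)
    return math.exp(-nearest)

def looks_like_member(training_points, x, threshold=0.9):
    """Guess that x was in the training set if the model is unusually
    confident about it. In a real attack, only the model's output
    would be visible, not training_points."""
    return confidence(training_points, x) >= threshold

training_points = [(0.0, 0.0), (1.0, 1.0)]
```

Here `looks_like_member(training_points, (1.0, 1.0))` flags the record as a likely training example, while a point halfway between the two training points is not flagged.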

Another important factor in machine learning security is model visibility. When you use a machine learning model that is published online, say on GitHub, you’re using a “white box” model. Everyone else can see the model’s architecture and parameters, including attackers. Having direct access to the model will make it easier for the attacker to create adversarial examples.

When your machine learning model is accessed through an online API such as Amazon Rekognition, Google Cloud Vision, or some other server, you’re using a “black box” model. Black-box ML is harder to attack because the attacker only has access to the output of the model. But harder doesn’t mean impossible. It is worth noting there are several model-agnostic adversarial attacks that apply to black-box ML models.

4- Know what to look for

What does this all mean for you as a developer or product manager? “Adversarial robustness for machine learning really differentiates itself from traditional security problems,” Chen says.

The security community is gradually developing tools to build more robust ML models. But there’s still a lot of work to be done. And for the moment, your due diligence will be a very important factor in protecting your ML-powered applications against adversarial attacks.

Here are a few questions you should ask when considering using machine learning models in your applications:

Where does the training data come from? Images, audio, and text files might seem innocuous per se. But they can hide malicious patterns that can poison the deep learning model that will be trained on them. If you’re using a public dataset, make sure the data comes from a reliable source, possibly vetted by a known company or an academic institution. Datasets that have been referenced and used in several research projects and applied machine learning programs have higher integrity than datasets with unknown histories.

What kind of data are you training your model on? If you’re using your own data to train your machine learning model, does it include sensitive information? Even if you’re not making the training data public, membership inference attacks might enable attackers to uncover your model’s secrets. Therefore, even if you’re the sole owner of the training data, you should take extra measures to anonymize the training data and protect the information against potential attacks on the model.

Who is the model’s developer? The difference between a harmless deep learning model and a malicious one is not in the source code but in the millions of numerical parameters they comprise. Therefore, traditional security tools can’t tell you whether a model has been poisoned or if it is vulnerable to adversarial attacks. So, don’t just download some random ML model from GitHub or PyTorch Hub and integrate it into your application. Check the integrity of the model’s publisher. For instance, if it comes from a renowned research lab or a company that has skin in the game, then there’s little chance that the model has been intentionally poisoned or adversarially compromised (though the model might still have unintentional adversarial vulnerabilities).

Who else has access to the model? If you’re using an open-source and publicly available ML model in your application, then you must assume that potential attackers have access to the same model. They can deploy it on their own machine, test it for adversarial vulnerabilities, and launch adversarial attacks on any other application that uses the same model out of the box. Even if you’re using a commercial API, you must consider that attackers can use the exact same API to develop an adversarial model (though the costs are higher than with white-box models). You must set your defenses to account for such malicious behavior. Sometimes, adding simple measures such as running input images through multiple scaling and encoding steps can have a great impact on neutralizing potential adversarial perturbations.
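
As a rough illustration of the scaling and encoding idea, the sketch below runs a grayscale image (a nested list of 0 to 255 values) through a downscale, an upscale, and a coarse quantization step before it would reach the model. The function names, the factor of 2, and the 8 gray levels are assumptions chosen for the example. Preprocessing like this can wash out small perturbations, but it is not a guaranteed defense and may cost some accuracy.

```python
def downscale(image, factor=2):
    """Average each factor x factor block into one pixel."""
    h, w = len(image), len(image[0])
    out = []
    for r in range(0, h - h % factor, factor):
        row = []
        for c in range(0, w - w % factor, factor):
            block = [image[r + dr][c + dc]
                     for dr in range(factor) for dc in range(factor)]
            row.append(sum(block) / len(block))
        out.append(row)
    return out

def upscale(image, factor=2):
    """Nearest-neighbor upscaling back to the original size."""
    out = []
    for row in image:
        wide = [v for v in row for _ in range(factor)]
        out.extend([wide[:] for _ in range(factor)])
    return out

def quantize(image, levels=8):
    """Snap every pixel to one of a few evenly spaced gray levels."""
    step = 255 / (levels - 1)
    return [[round(round(v / step) * step) for v in row] for row in image]

def squeeze(image):
    """Chain the three steps before handing the image to the model."""
    return quantize(upscale(downscale(image)))
```

In this toy setup, a flat gray image and a copy with a small alternating +3/-3 pixel pattern come out of `squeeze` identical, so the model would never see the perturbation.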

Who has access to your pipeline? If you’re deploying your own server to run machine learning inferences, take great care to protect your pipeline. Make sure your training data and model backend are only accessible by people who are involved in the development process. If you’re using training data from external sources (e.g., user-provided images, comments, reviews, etc.), establish processes to prevent malicious data from entering the training/deployment process. Just as you sanitize user data in web applications, you should also sanitize data that goes into the retraining of your model. As I’ve mentioned before, detecting adversarial tampering on data and model parameters is very difficult. Therefore, you must make sure to detect changes to your data and model. If you’re regularly updating and retraining your models, use a versioning system to roll back the model to a previous state if you find out that it has been compromised.
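
One low-tech way to detect changes to data and model files is to record a cryptographic hash of each file when you approve it and compare against that record later. The sketch below uses only Python’s standard library. The manifest format and the function names are assumptions for illustration, and in practice you would store the manifest somewhere an attacker with access to the files cannot also rewrite it.

```python
import hashlib
import json
from pathlib import Path

def file_digest(path, chunk_size=1 << 20):
    """SHA-256 of a file, read in chunks so large model files fit in memory."""
    h = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            h.update(chunk)
    return h.hexdigest()

def write_manifest(paths, manifest_path):
    """Record the current hash of every approved data or model file."""
    manifest = {str(p): file_digest(p) for p in paths}
    Path(manifest_path).write_text(json.dumps(manifest, indent=2, sort_keys=True))
    return manifest

def changed_files(manifest_path):
    """List files that are missing or whose contents no longer match."""
    manifest = json.loads(Path(manifest_path).read_text())
    changed = []
    for name, expected in manifest.items():
        p = Path(name)
        if not p.exists() or file_digest(p) != expected:
            changed.append(name)
    return sorted(changed)
```

A check like this only tells you that something changed, not whether the change was malicious, so it works best alongside the versioning system mentioned above.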

5- Know the tools

Earlier this year, AI researchers at 13 organizations, including Microsoft, IBM, Nvidia, and MITRE, jointly published the Adversarial ML Threat Matrix, a framework meant to help developers detect possible points of compromise in the machine learning pipeline. The ML Threat Matrix is important because it doesn’t only focus on the security of the machine learning model but on all the components that comprise your system, including servers, sensors, websites, etc.

The AI Incident Database is a crowdsourced bank of events in which machine learning systems have gone wrong. It can help you learn about the possible ways your system might fail or be exploited.

Big tech companies have also released tools to harden machine learning models against adversarial attacks. IBM’s Adversarial Robustness Toolbox is an open-source Python library that provides a set of functions to evaluate ML models against different types of attacks. Microsoft’s Counterfit is another open-source tool that checks machine learning models for adversarial vulnerabilities.

Machine learning needs new perspectives on security. We must learn to adjust our software development practices according to the emerging threats of deep learning as it becomes an increasingly important part of our applications. Hopefully, these tips will help you better understand the security considerations of machine learning.

Ben Dickson is a software engineer and the founder of TechTalks. He writes about technology, business, and politics.